Cannot open this file because of an error.: Template Url must be an Amazon S3 URL.

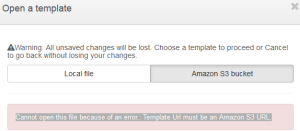

Are you trying to open a CloudFormation template in CloudFormation Designer from an S3 bucket and getting the following error:

Cannot open this file because of an error.: Template Url must be an Amazon S3 URL.

Well, you’re not alone. This happens to me even after I’ve created the template in the Designer and created a stack from it (which stores the template in the bucket automatically for me), and then go back into the Designer and try to open it using the S3 URL.

The short story is this:

- Create your template files in a bucket that is in the same Region as the one you are creating the stack in

- Ensure the template file name has no spaces in it (underscores are acceptable; this is what caught me)

- Ensure the S3 URL is no longer than 1024 characters
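Two of the checks above (spaces and URL length) are easy to run before pasting a link into the Designer. This is a minimal Python sketch using only the standard library; `preflight_template_url` is a hypothetical helper of my own, and it assumes the console-style encoding where `+` (or `%20`) stands for a space in the object key. It does not check the Region or permissions.

```python
from urllib.parse import unquote_plus, urlparse

MAX_URL_LENGTH = 1024  # limit quoted from the CloudFormation docs


def preflight_template_url(url: str) -> list[str]:
    """Return a list of problems found in a template link (empty list means OK)."""
    problems = []
    if len(url) > MAX_URL_LENGTH:
        problems.append(f"URL is {len(url)} characters; the limit is {MAX_URL_LENGTH}")
    # The console link encodes spaces as '+' (or '%20'); decode to see the real key.
    key = unquote_plus(urlparse(url).path)
    if " " in key:
        problems.append("object key contains spaces; rename it (underscores are fine)")
    return problems
```

An empty list only means the link passes these two checks; a wrong Region or missing read permission will still fail in the Designer.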

The long story is:

The AWS documentation at http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/cfn-using-console-create-stack-template.html mentions:

- Specify an Amazon S3 template URL: Specify a URL to a template in an Amazon S3 bucket. If you have a template in a versioning-enabled bucket, you can specify a specific version of the template, such as https://s3.amazonaws.com/templates/myTemplate.template?versionId=123ab1cdeKdOW5IH4GAcYbEngcpTJTDW. For more information, see Managing Objects in a Versioning-Enabled Bucket in the Amazon Simple Storage Service Console User Guide. The URL must point to a template (max size: 460,800 bytes) in an Amazon S3 bucket that you have read permissions to, located in the same region as the stack. The URL itself can be, at most, 1024 characters long.
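The versioned URL and the two size limits quoted above can be checked mechanically. A minimal Python sketch using only the standard library; the helper names are hypothetical, and the limits are simply the figures quoted from the docs:

```python
from urllib.parse import urlencode, urlsplit, urlunsplit

MAX_TEMPLATE_BYTES = 460_800  # "max size: 460,800 bytes" per the quoted docs
MAX_URL_LENGTH = 1024         # "at most, 1024 characters long"


def versioned_template_url(url: str, version_id: str) -> str:
    """Append a versionId query parameter to a template URL."""
    parts = urlsplit(url)
    query = urlencode({"versionId": version_id})
    if parts.query:  # keep any query string that is already there
        query = parts.query + "&" + query
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, parts.fragment))


def within_documented_limits(url: str, template_body: bytes) -> bool:
    """True if both the URL length and the template size are inside the quoted limits."""
    return len(url) <= MAX_URL_LENGTH and len(template_body) <= MAX_TEMPLATE_BYTES
```

For example, `versioned_template_url("https://s3.amazonaws.com/templates/myTemplate.template", "123ab1cdeKdOW5IH4GAcYbEngcpTJTDW")` reproduces the example URL from the docs.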

So, point one: make sure your bucket is in the same Region you’re creating the stack in.

I get my URL by opening the S3 bucket, viewing the properties of the file, and copying the Link value. I end up with something like this:

https://s3-ap-southeast-2.amazonaws.com/cf-templates-16gvle5og07pw-ap-southeast-2/2016018eT7-designer/Spark+RA+Test.txtf4nrgijpmz
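To check point one against a link like this, you can pull the Region, bucket, and key out of it. A minimal Python sketch, assuming a path-style link on either the legacy `s3-<region>.amazonaws.com` host shown here or the plain `s3.amazonaws.com` host (which the S3 docs quoted below describe as the US East (N. Virginia) endpoint); newer dotted `s3.<region>` hosts would need another branch. `parse_path_style_s3_url` is a hypothetical helper:

```python
from urllib.parse import unquote_plus, urlparse


def parse_path_style_s3_url(url: str) -> tuple[str, str, str]:
    """Split a path-style S3 link into (region, bucket, key)."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    if host == "s3.amazonaws.com":
        region = "us-east-1"  # plain endpoint = US East (N. Virginia)
    elif host.startswith("s3-") and host.endswith(".amazonaws.com"):
        region = host[len("s3-"):-len(".amazonaws.com")]
    else:
        raise ValueError(f"not a path-style S3 host: {host}")
    # First path segment is the bucket; the rest is the (console-encoded) key.
    bucket, _, key = parsed.path.lstrip("/").partition("/")
    return region, bucket, unquote_plus(key)
```

Comparing the returned region with the Region you are creating the stack in covers point one; for the link above it returns `ap-southeast-2`, and the decoded key contains spaces.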

Looks good to me. FYI, this file was created automatically by the Designer when I saved it to the S3 bucket, so it was not a manual upload.

Further, the information at http://docs.aws.amazon.com/AmazonS3/latest/dev/UsingBucket.html states:

Accessing a Bucket

You can access your bucket using the Amazon S3 console. Using the console UI, you can perform almost all bucket operations without having to write any code.

If you access a bucket programmatically, note that Amazon S3 supports RESTful architecture in which your buckets and objects are resources, each with a resource URI that uniquely identify the resource.

Amazon S3 supports both virtual-hosted–style and path-style URLs to access a bucket.

- In a virtual-hosted–style URL, the bucket name is part of the domain name in the URL. For example:

  - http://bucket.s3.amazonaws.com

  - http://bucket.s3-aws-region.amazonaws.com

  In a virtual-hosted–style URL, you can use either of these endpoints. If you make a request to the http://bucket.s3.amazonaws.com endpoint, the DNS has sufficient information to route your request directly to the region where your bucket resides.

- In a path-style URL, the bucket name is not part of the domain (unless you use a region-specific endpoint). For example:

  - US East (N. Virginia) region endpoint, http://s3.amazonaws.com/bucket

  - Region-specific endpoint, http://s3-aws-region.amazonaws.com/bucket

  In a path-style URL, the endpoint you use must match the region in which the bucket resides. For example, if your bucket is in the South America (Sao Paulo) region, you must use the http://s3-sa-east-1.amazonaws.com/bucket endpoint. If your bucket is in the US East (N. Virginia) region, you must use the http://s3.amazonaws.com/bucket endpoint.
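To make the two URL styles concrete, here is a minimal Python sketch that builds both forms for a given bucket, Region, and key, using the `s3-<region>` host format quoted above. The function name is hypothetical, and I’ve used `https` rather than the `http` in the quoted examples:

```python
from urllib.parse import quote


def s3_object_urls(bucket: str, region: str, key: str) -> dict[str, str]:
    """Build path-style and virtual-hosted-style URLs for an object."""
    # Per the quoted docs, US East (N. Virginia) uses the plain s3.amazonaws.com
    # endpoint; other regions use the s3-<region> form.
    endpoint = "s3.amazonaws.com" if region == "us-east-1" else f"s3-{region}.amazonaws.com"
    encoded_key = quote(key, safe="/")  # percent-encode spaces etc., keep slashes
    return {
        "path_style": f"https://{endpoint}/{bucket}/{encoded_key}",
        "virtual_hosted": f"https://{bucket}.{endpoint}/{encoded_key}",
    }
```

For a Sao Paulo bucket, the path-style entry comes out as `https://s3-sa-east-1.amazonaws.com/<bucket>/<key>`, which matches the rule that the path-style endpoint must match the bucket’s region.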

My URL FAILS!

When I view the permissions on the file, for some reason they don’t list my IAM user account but someone else’s. In fact, all my buckets have the same owner, and all files inherit it.

This is explained here: http://docs.aws.amazon.com/AmazonS3/latest/dev/UsingBucket.html

The AWS account that creates a resource owns that resource. For example, if you create an IAM user in your AWS account and grant the user permission to create a bucket, the user can create a bucket. But the user does not own the bucket; the AWS account to which the user belongs owns the bucket.

So this is normal behaviour.

I tried adding the grantee Everyone with View/Download permissions. The setting stuck, but it didn’t help.

If I right-click the file and select Make Public, I’m still not able to open it in the Designer. And yes, it was made public correctly: I can now click the link in the S3 interface and get prompted to download the file, instead of getting an XML Access Denied message. So I could download the file locally and use the Local File option, but I don’t want to.

OK, so, getting desperate, I enabled Static Website Hosting on the S3 bucket. This gave me a URL of http://paulsconfigfiles.s3-website-ap-southeast-2.amazonaws.com/Spark+RA+Test.txt for my template file.
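The website endpoint (`s3-website-<region>`) is a different host from the REST endpoints quoted earlier, so it probably doesn’t satisfy the “must be an Amazon S3 URL” check anyway. As a sketch, assuming the `s3-website-<region>` host form shown above (the function name is hypothetical), a website link can be mapped back to the path-style REST URL for the same object:

```python
from urllib.parse import urlparse


def website_to_rest_url(url: str) -> str:
    """Rewrite a static-website-hosting link as a path-style REST S3 URL."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    marker = ".s3-website-"
    if marker not in host or not host.endswith(".amazonaws.com"):
        raise ValueError(f"not an s3-website-<region> host: {host}")
    # Host looks like <bucket>.s3-website-<region>.amazonaws.com
    bucket, rest = host.split(marker, 1)
    region = rest[: -len(".amazonaws.com")]
    endpoint = "s3.amazonaws.com" if region == "us-east-1" else f"s3-{region}.amazonaws.com"
    return f"https://{endpoint}/{bucket}{parsed.path}"
```

This only changes the host; the spaces in the key and the object’s permissions stay exactly as they were.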

Now when I use this in the browser I get the following error:

403 Forbidden

- Code: AccessDenied

- Message: Access Denied

- RequestId: 213E1C4F0506E2A3

- HostId: jd+eguzjIfnQqrfLvT+HjXLE+LYzskdVkfeoXan/u0s8UsNOUqWaW4+em7qD2qHgxFOY4f5kHZg=

An Error Occurred While Attempting to Retrieve a Custom Error Document

- Code: AccessDenied

- Message: Access Denied

And I still can’t open it using the Designer. Joy.

In desperation, I went into the Designer, created a blank design, added a single entity (I didn’t care which one), and saved it to S3.

I found it just fine in my region’s automatically created CloudFormation S3 bucket.

I copied the link, reopened it in the Designer, and it WORKED.

WTF.

I tried to open another one that already existed, and it FAILED.

However, clicking the new one I had just created in S3 resulted in an Access Denied error:

<Error><Code>AccessDenied</Code><Message>Access Denied</Message><RequestId>F8C491805CB6F710</RequestId><HostId>85RD++JWfQ8S596287CDqMWBmArvuS55GkjQU/VBZlEYYE/GH+CaZoDyRyFLHhFpR0gh47PoROE=</HostId></Error>

At this point dismay is setting in.

This shouldn’t be this hard.

So even though I get AccessDenied, I can still open my newly created template in the Designer. Interesting, yet not helpful. The permissions on both files are exactly the same, and the inline policies on both are empty.

So I deleted my S3 bucket and started again.

Creating files only from the Designer gave mixed results.

Some files open and some don’t after saving via the Designer.

It turns out the FILENAME plays a part, because it changes the resulting link. The maximum length of the S3 URL is 1024 characters.
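Here is why the filename matters: a space turns into `+` or `%20` depending on which encoder builds the link, while an underscore passes through untouched. A short illustration with the standard library’s `urllib.parse`; `safe_template_name` is a hypothetical helper:

```python
from urllib.parse import quote, quote_plus


def safe_template_name(name: str) -> str:
    """Replace spaces with underscores, which the checklist at the top says are fine."""
    return name.replace(" ", "_")


name = "Spark RA Test.txt"
print(quote_plus(name))                 # Spark+RA+Test.txt (form-style, like the console link)
print(quote(name))                      # Spark%20RA%20Test.txt (percent-encoded)
print(quote(safe_template_name(name)))  # Spark_RA_Test.txt (nothing to encode)
```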

https://s3.amazonaws.com/cf-templates-16gvle5og07pw-us-east-1/2016018Th3-PaulsVirginTemplater8cez55dn9

These two are well below 1024 characters. The difference is that I used a space in the name of the first one. The first one is 101 characters; the second is 102.

If I rename the first file so that it has no spaces, the new resulting link opens in the Designer without a problem.
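S3 has no real rename, so “renaming” the file means copying it to a new key and deleting the old one. A minimal sketch, assuming boto3 is installed and you pass in an S3 client (for example `boto3.client("s3")`); `rename_s3_object` and the underscore rule in `renamed_key` are hypothetical helpers, while `copy_object` and `delete_object` are standard boto3 S3 client methods:

```python
def rename_s3_object(s3_client, bucket: str, old_key: str, new_key: str) -> None:
    """'Rename' an S3 object by copying it to new_key, then deleting old_key.

    s3_client is assumed to be a boto3 S3 client, e.g. boto3.client("s3").
    """
    s3_client.copy_object(
        Bucket=bucket,
        Key=new_key,
        CopySource={"Bucket": bucket, "Key": old_key},
    )
    # Only delete the original once the copy call has returned without error.
    s3_client.delete_object(Bucket=bucket, Key=old_key)


def renamed_key(old_key: str) -> str:
    """Hypothetical naming rule: swap spaces for underscores in the key."""
    return old_key.replace(" ", "_")
```

Usage would be something like `rename_s3_object(boto3.client("s3"), bucket, key, renamed_key(key))`, after which you copy the new link from the S3 console as before.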

W.T.F AWS! 😛